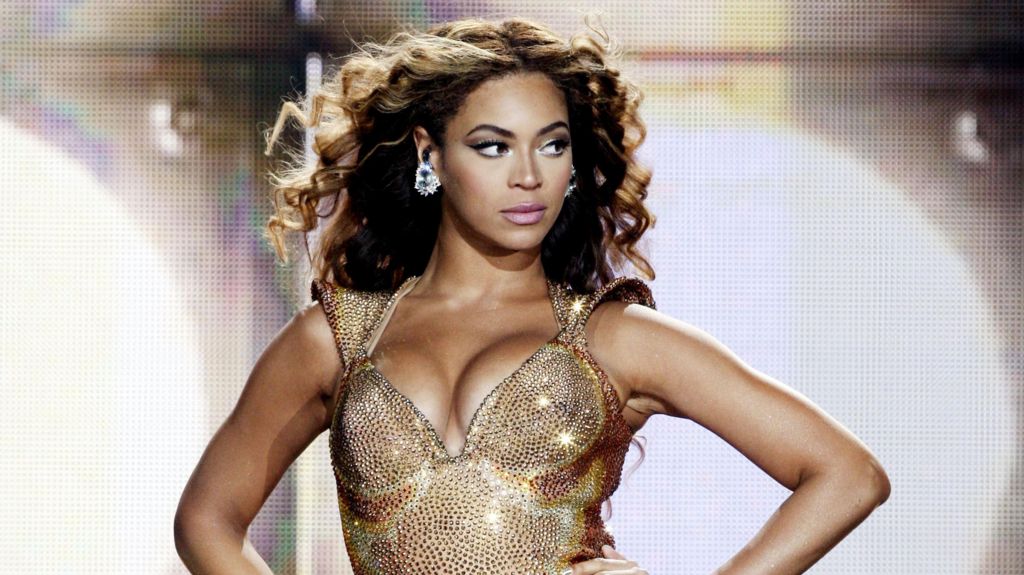

Sony Music has initiated a massive takedown campaign targeting over 135,000 AI-generated deepfake songs fraudulently impersonating its top artists on streaming platforms. The music conglomerate revealed that sophisticated generative AI technology has been weaponized to create counterfeit tracks featuring unauthorized vocal clones of global superstars including Beyoncé, Harry Styles, Queen, Bad Bunny, Miley Cyrus, and Mark Ronson.

According to Dennis Kooker, President of Sony’s Global Digital Business, these AI forgeries represent a calculated commercial threat that directly harms legitimate artists, particularly during critical album promotion cycles. “In the worst cases, they potentially damage a release campaign or tarnish the artist’s reputation,” Kooker stated, emphasizing that deepfakes exploit the demand artists generate while undermining those artists’ creative objectives.

The scale of this deception is accelerating as AI tools become more accessible. The 135,000 fraudulent tracks Sony has identified likely represent only a fraction of the total across streaming services, with 60,000 detected since March 2025 alone.

This revelation emerged during Wednesday’s launch of the IFPI’s Global Music Report in London, which highlighted the industry’s paradoxical success amid technological threats. Recorded music revenues grew 6.4% in 2025 to $31.7 billion, the eleventh consecutive year of growth, driven largely by streaming subscriptions. Taylor Swift was the year’s top artist with her album ‘The Life Of A Showgirl’, and China surpassed Germany to become the world’s fourth-largest music market.

The event coincided with the UK government’s pivotal decision to abandon plans that would have allowed AI firms to train their models on copyrighted material without permission, a move welcomed by IFPI CEO Victoria Oakley as evidence of governments “grappling with squaring creativity protection with innovation encouragement.”

Beyond deepfakes, the industry confronts streaming manipulation schemes in which artificially boosted play counts divert royalties from legitimate artists. The IFPI estimates that up to 10% of streaming content may be fraudulent, with AI technology having “supercharged” these practices.

Oakley urged streaming platforms to implement AI-detection tools, citing the existing system at French service Deezer, which identifies 34% of submissions as AI-generated. Kooker emphasized that transparency about where content comes from is essential: “Without proper identification, fans can’t distinguish genuine human creativity from unauthorized AI content, undermining trust and user experience.”