Over the past five years, India’s rapid embrace of cashless digital payments has unlocked unprecedented convenience for millions of consumers, but it has also opened the door to a soaring wave of cyber-enabled financial fraud that has drained billions of dollars from ordinary accounts. The scale of the crisis became stark in new data showing nearly 2.5 million Indian citizens lost a combined $25 billion to digital scams in 2025 alone, marking a staggering 4,300% increase in total losses since 2021. This explosive growth has forced India’s central banking regulator, the Reserve Bank of India (RBI), to intervene with a slate of proposed policy changes aimed at curbing the harm, though industry experts warn the new measures face major implementation hurdles and may deliver only limited impact.

The human cost of the crisis is illustrated by the experience of Alok, a business analyst based in Pune whose identity has been protected by changing his name. In February, he received an urgent text message claiming he owed a 1,000 rupee ($10.75) speeding fine, warning that his driving license would be suspended if he did not pay immediately. Pressured to act quickly, Alok clicked a linked payment portal and entered a one-time password (OTP) to complete what he thought was the small transaction. Within minutes, his credit card had been charged its full limit of $3,225.

Alok had fallen victim to one of India’s most common social engineering scams, where fraudsters use psychological manipulation, stoking fear and urgency, to trick users into revealing sensitive authentication details that allow scammers to drain their accounts. These fake messages mimic official government or bank communications, directing unsuspecting victims to convincing phishing websites that harvest their credentials. As digital payment adoption has accelerated far faster than public digital literacy and regulatory safeguards, this type of fraud has evolved into a national crisis.

In response, the RBI released a public discussion paper earlier this month outlining a series of potential reforms to crack down on illegal activity. The most notable proposals include mandating a one-hour processing delay for account-to-account money transfers, requiring additional authentication from a pre-approved trusted person for high-value payments made by vulnerable groups such as senior citizens, imposing stricter limits and ongoing reviews for large credits to new customer accounts to flag potential money mule accounts used to launder fraudulent funds, and giving consumers the ability to toggle digital payment services on or off and set custom transaction limits, similar to the controls already available for physical credit and debit cards.

While experts broadly praise the RBI’s proactive, consultative approach to addressing the crisis, many question the effectiveness and practicality of the proposed policies. Rajesh Bansal, former CEO of the RBI Innovation Hub, told the BBC that while a payment processing delay could block the type of OTP scam that targeted Alok, these simpler scams now make up only a tiny fraction of total fraudulent activity in India. “These scams were the dominant variety three or four years ago, but frauds have now moved to another level, and are far more sophisticated,” Bansal explained.

Wriju Ray, a senior leader at leading regulatory technology firm IDfy, notes that implementing a system-wide payment delay would also be extremely challenging logistically. India’s digital payment ecosystem is built around the core principle of instant transaction processing, so adding delays would require a complete overhaul of existing network architecture, from transaction queuing systems to transaction cancellation protocols. The RBI itself acknowledges this challenge, admitting in the discussion paper that the change would require significant cost and effort across the entire industry, and would contradict the core design of India’s real-time payment infrastructure. Bansal compares the change to “building an expressway and adding speed breakers every few kilometres,” creating unnecessary friction for legitimate users while doing little to stop determined scammers. Ray adds that fraudsters are likely to adapt to the change, for example by instructing victims to complete transactions an hour in advance to avoid triggering any fraud alerts.

Other proposals also raise practical questions, experts say. While extra authentication for elderly users is a well-intentioned idea, it is unclear how the rule would work in practice: what if the trusted person is traveling abroad? And if the trusted person approves a transaction that still turns out to be fraudulent, who bears legal responsibility for the loss?

The plan to strengthen detection of money mule accounts through stricter checks and credit limits may be effective, but it would also impose heavy new compliance costs on financial institutions, costs that would ultimately be passed on to ordinary consumers, Ray argues. Bansal adds that the RBI already has a fully developed mule detection platform called Mulehunter.AI, which was created during his tenure as CEO and is designed to provide real-time intelligence on high-risk beneficiary accounts. The platform has yet to be rolled out for widespread real-time use across India’s banking system, and Bansal is calling for its expedited implementation as a more effective near-term solution.

Beyond regulatory changes, experts agree that policy intervention is only one piece of the puzzle. Closing the gap between rapid digital adoption and public digital literacy is a critical, long-overdue priority. India’s population has moved to digital payments at a breakneck pace that outstripped the growth of consumer education and fraud awareness safeguards. While the RBI has launched public awareness campaigns, recruiting high-profile celebrities such as Amitabh Bachchan and running ads during widely viewed Indian Premier League cricket matches, experts say far more investment is needed to bring digital literacy to all segments of the population, particularly vulnerable groups like the elderly that are most often targeted by scammers.

Experts also add that the RBI needs to deepen cross-agency collaboration with police forces, relevant government ministries, the securities and markets regulator, and other stakeholders to tackle the root of the fraud crisis, as fragmented responsibility across agencies has slowed effective action to date.

Despite the concerns about the specific proposals, experts do welcome the RBI’s new open, consultative approach to addressing the problem, a marked shift from the bank’s past practice of issuing top-down decrees without public input. Ray notes that the ongoing public discussion of the crisis is itself a positive step that will ultimately lead to more effective, widely accepted regulation over time.

Category: technology

-

Musk accuses OpenAI lawyer of trying to ‘trick’ him in combative testimony

One of the most closely watched legal battles in the history of artificial intelligence entered a tense new phase this week, as Tesla and SpaceX CEO Elon Musk took the stand for a second day of testimony in his multi-billion-dollar lawsuit against OpenAI, co-founder Sam Altman, and OpenAI president Greg Brockman. The Oakland, California courtroom has become the center of a debate that will shape the future direction of the AI sector, pitting Musk against his former colleagues over the core founding mission of one of the world’s most valuable tech companies. On the stand, Musk repeatedly pushed back against aggressive questioning from OpenAI’s lead defense attorney William Savitt, at one point labeling the lawyer’s line of inquiry unnecessarily convoluted and intentionally designed to trip him up. “Your questions are not simple,” Musk told Savitt mid-examination. “They’re designed to trick me essentially.”

Musk, a founding investor of OpenAI, launched the lawsuit in 2024, alleging that Altman, Brockman, and major OpenAI backer Microsoft betrayed the organization’s original non-profit charter by shifting OpenAI to a for-profit operating model. He argues that the founders explicitly misled him and other early supporters about the long-term direction of the company, which was launched with the stated public mission of developing artificial general intelligence (AGI) — AI systems that outperform human-level intelligence across all domains — for public benefit, not private profit.

The tech billionaire laid out his core position clearly during his opening testimony, framing the case as a fundamental check on the integrity of charitable organizations. “It’s actually very simple,” he said. “It’s not okay to steal a charity… If it’s okay to loot a charity, the entire foundation of charitable giving will be destroyed.” Musk acknowledged that he contributed nearly $38 million to the non-profit OpenAI as an early backer, covering almost all of the organization’s initial operating costs because he wanted to ensure it stayed aligned with its public-focused mission. He admitted that he expected to cede control as more stakeholders joined the project, but said he never anticipated the entire mission would be flipped to prioritize commercial profit. “I could have done that with OpenAI, but I chose not to. I chose something that was for the public benefit,” he said. “I deliberately chose to create this as a non-profit for the public good.”

Through his legal team, Musk is seeking billions of dollars in damages for what he calls OpenAI’s “wrongful gains,” all of which he says should be redirected to fund OpenAI’s non-profit division. He is also calling for a full leadership shakeup, including the removal of Altman from his top executive role at the company.

OpenAI’s legal team has struck back with a sharply contrasting narrative, arguing that Musk’s lawsuit is nothing more than an attempt to sabotage a leading rival in the global AI race. The company says Musk left OpenAI in 2018 only after he failed to seize full control of the organization, and that his current legal action is driven by regret over walking away from the company years before its ChatGPT product revolutionized the AI industry and generated hundreds of billions of dollars in market value.

Savitt used his cross-examination to highlight what he frames as a contradiction in Musk’s position: while Musk insists OpenAI must remain non-profit to ensure AGI safety, his own competing AI startup, xAI — launched in 2023, a year after ChatGPT’s blockbuster debut — operates as a for-profit company. Savitt also argued that Musk has long sought to advance his own commercial interests through OpenAI, claiming Musk tried to force a merger between OpenAI and Tesla, and used his early investment as leverage to “bully” other co-founders. “We’re here because Mr Musk didn’t get his way at OpenAI,” Savitt told the court. “Because he’s a competitor, Mr Musk will do anything to attack OpenAI.”

On the second day of testimony, Altman and Brockman sat in the front row of the courtroom observing the proceedings, and Altman is expected to take the stand later in the trial. The case, which is set to run for several weeks, has already drawn widespread public attention: crowds of demonstrators have gathered outside the Oakland courthouse, and tech industry observers across the globe are tracking the outcome closely, as a ruling for either side could set lasting precedents for how AI organizations are structured, governed, and held accountable to their original missions. Analysts note that Musk’s xAI has trailed OpenAI in market adoption and product development since its launch, a context OpenAI has leaned on to bolster its claim that the lawsuit is driven by competitive jealousy.

OpenAI has pushed back on all of Musk’s core claims, maintaining that Musk was fully aware of and supported the 2019 decision to launch a commercial arm to fund the massive costs of AI research years before ChatGPT launched. The company also says all of Musk’s original $38 million donation was spent exactly as intended, in service of the organization’s founding mission.

As the trial unfolds, the global tech industry waits for a verdict that could reshape the dynamics of the fast-growing AI sector, redefining the line between non-profit public mission and commercial innovation in one of the most important technological revolutions of the century.

-

Seven lawsuits filed against OpenAI by families of Canada mass-shooting victims

On February 10, one of the deadliest mass shootings in Canadian history unfolded in the small northern British Columbia community of Tumbler Ridge, leaving eight people dead, six of them children. The 18-year-old gunman, Jessie Van Rootselaar, who opened fire at the town’s secondary school, ultimately died from a self-inflicted gunshot wound. Among the survivors is 12-year-old Maya Gebala, who remains hospitalized after being shot three times in the head, neck, and cheek.

Months after the tragedy, a wave of groundbreaking litigation has placed one of the world’s most valuable tech companies at the center of growing scrutiny over AI safety accountability. Seven families of those killed and injured in the attack have filed a new lawsuit in a California state court against OpenAI and its chief executive Sam Altman, marking one of the first major legal attempts to hold a leading AI developer responsible for a violent act linked to its platform. The suit replaces an earlier smaller claim filed in a Canadian court by Gebala’s family, which is being voluntarily withdrawn as the legal team expands its action. Jay Edelson, who leads a joint US-Canadian legal team representing the families, confirmed he expects to file more than two dozen additional jury trial claims on behalf of other victims and impacted community members in the coming weeks.

The core allegation of the litigation is that OpenAI’s executive leadership, including Altman, acted with gross negligence and intentionally chose corporate profit and reputation over public safety when they ignored repeated warnings from their own safety team about the gunman’s harmful activity on ChatGPT. According to the suit, Van Rootselaar’s conversations with ChatGPT, which included detailed descriptions of gun violence scenarios and attack planning, were flagged as an imminent threat by OpenAI’s internal 12-person safety monitoring team months before the shooting. The team formally recommended that the activity be reported to the Royal Canadian Mounted Police (RCMP), but senior OpenAI leadership vetoed the decision. The complaint alleges that leadership blocked the alert to protect OpenAI’s $850 billion valuation and public image, writing that “they did the math and decided that the safety of the children of Tumbler Ridge was an acceptable risk.”

The suit further claims that OpenAI falsely stated it had banned Van Rootselaar from the platform after flagging his activity, when in fact the company’s loose account policies allowed the gunman to easily create a new account under his own name and continue planning the attack unimpeded. OpenAI has pushed back against these claims, asserting that it revokes access for banned users and implements measures to prevent repeat account creation. The company also said it has a strict zero-tolerance policy for any use of its tools to facilitate violence.

In the weeks after the shooting, Altman issued a public apology to the victims’ families in an open letter published by local outlet Tumbler Ridge Lines. “I am deeply sorry that we did not alert law enforcement,” Altman wrote, adding: “While I know words can never be enough, I believe an apology is necessary to recognize the harm and irreversible loss your community has suffered.”

Since the lawsuit was filed, OpenAI has moved quickly to implement visible changes to its safety protocols, releasing a public blog post this Tuesday outlining updated procedures for responding to potentially dangerous user behavior. A company spokesperson confirmed that OpenAI has already strengthened its internal safeguards, including improved risk assessment and escalation protocols for potential violent threats. The company has also committed to working with Canadian officials at all levels of government to prevent similar tragedies, a promise Altman reiterated in his apology letter.

Edelson’s legal team has been pushing for access to Van Rootselaar’s full ChatGPT chat logs, which OpenAI has so far refused to release. The legal team expects to compel disclosure through the discovery process of the California lawsuit, with plans to present the internal decision-making directly to a jury. “We’re going to put the jury in the room when the decision was made to not tell the Canadian authorities,” Edelson told the BBC. “We’re going to show them how people were jumping up and down saying we need to protect this town, and we’re going to show them how Sam Altman and OpenAI routinely make these decisions to put their own interests first.”

This litigation is not the only scrutiny OpenAI is facing over links between its platform and violent attacks. The company is already the subject of an ongoing criminal probe in Florida connected to a 2025 shooting at Florida State University that left two people dead and multiple others injured, where the accused shooter is reported to have used ChatGPT ahead of the attack. The Tumbler Ridge lawsuit has opened a new chapter in global debates about AI governance, forcing a public test of whether tech developers can be held legally liable for failing to mitigate known threats stemming from their generative AI tools.

-

EU finds Meta failing to keep under-13s off Facebook, Instagram

The European Commission on Wednesday announced preliminary findings that tech giant Meta has failed to enforce its own minimum age rule of 13 for Facebook and Instagram, leaving underage users exposed to harmful online content and the company facing potential penalties that could reach billions of dollars. The findings mark a major step forward in the EU’s sweeping campaign to tighten protections for minors navigating digital spaces, following similar policy moves around the world.

The investigation, launched back in May 2024 under the bloc’s landmark Digital Services Act (DSA), uncovered critical flaws in Meta’s age verification systems. Regulators confirmed that under-13s can easily bypass existing restrictions simply by entering a false date of birth, with no effective cross-checks in place to catch these inaccuracies. Additionally, the platform’s built-in tool for reporting underage accounts was found to be unnecessarily convoluted, requiring up to seven separate clicks just to reach the reporting form, rendering it largely ineffective for most users.

EU officials pointed out that Meta’s own terms of service have long set 13 as the minimum age for platform access, but the company has failed to turn that written policy into actionable protection. “Terms and conditions should not be mere written statements, but rather the basis for concrete action to protect users — including children,” said Henna Virkkunen, the European Commissioner responsible for technology. Brussels also pushed back against Meta’s internal risk assessment, noting it contradicts widespread data across EU member states showing between 10 and 12 percent of all under-13s regularly access the two platforms.

If the preliminary findings are finalized after the review period, the EU has the authority to impose fines equal to as much as 6 percent of Meta’s total global annual revenue, a penalty that could amount to billions of dollars for the company. Meta has rejected the EU’s conclusions, noting it has existing systems in place to identify and remove underage accounts. “We’re clear that Instagram and Facebook are intended for people aged 13 and older and we have measures in place to detect and remove accounts from anyone under that age,” a Meta spokesperson said, adding the company plans to continue constructive dialogue with EU regulators. The firm could still avoid financial penalties by implementing sufficient fixes to address the identified violations.

Wednesday’s announcement is just one part of a broader EU push to rein in harmful practices from large technology companies when it comes to child safety online. Back in February, regulators issued an unprecedented warning to TikTok, demanding the platform alter its famously addictive algorithm design or face heavy fines. The ongoing Meta probe also includes additional investigations into the platforms’ impacts on user mental and physical health, as well as assessments of whether their design features intentionally encourage compulsive use.

The EU’s child safety push has gained new momentum after Australia introduced a groundbreaking national ban on social media use for anyone under 16 earlier this year, putting intense political pressure on Brussels to adopt sweeping bloc-wide rules. Several EU member states have already floated national proposals to ban under-16s from social platforms, and the European Commission confirmed Wednesday it is currently exploring the feasibility of a uniform EU-wide age minimum for social media access. To support these upcoming rules, the Commission also announced this month that a purpose-built EU age verification app is complete and set to roll out across the bloc in the coming months, designed to replace the ineffective pop-up age confirmation banners currently used by most adult and social platforms. Just last month, regulators also penalized four major adult pornography platforms including Pornhub for failing to block underage access to their content in violation of EU digital rules.

The DSA, the EU’s flagship digital regulation that forms the legal basis for this probe, has already faced fierce criticism from the administration of U.S. President Donald Trump, who has argued the rules unfairly target American technology companies.

-

Cheaper, cleaner electric trucks overhaul China’s logistics

An hour’s drive from central Beijing, a gritty open-air charging lot hums with nonstop activity: heavy-duty trucks roll in, top up their batteries in minutes, and pull out again to deliver goods across northern China. This small stop is just one thread in a fast-expanding web of electric truck infrastructure that is upending China’s $500-billion logistics industry, and poised to reshape global road freight in the coming years.

While China’s global leadership in electric passenger cars has been well documented for over a decade, the transition of heavy-duty commercial trucks to zero-emission powertrains is a far more recent shift, one that has accelerated sharply over just the past four years. Industry analysts and market data show the transition is moving far faster than many predicted, driven by falling battery costs, aggressive policy support, and a growing network of public charging and battery-swapping stations that solve the core range concerns that long held back adoption.

Experts mark last year as the turning point for heavy-duty electric vehicle adoption in China. “Last year was the breakthrough for heavy electrified vehicles in China,” explained Lauri Myllyvirta, co-founder of the Centre for Research on Energy and Clean Air and a leading analyst of China’s energy transition. “If the infrastructure is there, the economics are there for an increasing number of logistics routes and requirements.”

Market data bears this out: new energy (predominantly electric) truck models accounted for 29% of all new truck sales in China in 2025, up from just 14% in 2024, according to Commercial Vehicle World, a Beijing-based market intelligence firm. As recently as 2021, electric trucks made up less than 1% of total sales. Manufacturers and analysts widely expect the electric share of sales to cross 50% within the next three to five years, a milestone that would lock in the end of diesel truck growth in China.

For the drivers who operate these trucks every day, the shift to electric models has brought immediate quality-of-life and cost improvements. At the Miyun District charging station, 43-year-old truck driver Wang, who switched to an electric rig last year, described the difference from his old diesel model as night and day. “It’s such a breeze!” he told AFP while connecting his truck to a fast charger. “My old vehicle had over 10 gears, and its operation was so cumbersome. But with this one, you don’t have to do a thing — it’s all automatic.”

Wang added that the push toward electric trucks is driven by both national policy incentives and basic market economics that make zero-emission models more profitable for logistics companies and independent drivers alike. “It’s just survival of the fittest. Now, with freight expenses and everything, people are trying to earn a bit more, and this one has lower operating costs.”

Another driver at the station, Zhang, switched to an electric Howo-branded truck, built by state-owned manufacturer Sinotruk, two months ago after years driving a natural gas-powered rig. Hauling sand and gravel for short-haul construction trips around Beijing, Zhang noted that his new truck has a maximum range of 240 to 250 kilometers, which works for his daily route but is not yet suited for long-haul cross-country trips. “The power is pretty strong, the acceleration is fast. It’s all about speed, but the range is a bit lacking,” he said.

As domestic adoption accelerates, Chinese electric truck manufacturers are now setting their sights on international markets, following the same expansion path that turned Chinese passenger EV brands into global competitors. “Similar to passenger vehicles, China’s heavy truck manufacturers are beginning to view export markets as an inevitable strategy due to rising competition and the eventual saturation in the Chinese market,” said Christopher Doleman, an energy analyst at the Institute for Energy Economics and Financial Analysis.

Doleman added that recent global energy market volatility sparked by the ongoing Middle East war has acted as an unexpected accelerant for this global transition. “There is likely to be higher demand for electric heavy-duty vehicles as fleet owners try to minimise their vulnerability to volatile diesel costs,” he explained. Han Wen, founder of Belgium-based electric truck startup Windrose Technology, confirmed that the shift has already boosted demand for zero-emission models globally.

Founded in 2022, Windrose leverages China’s world-leading EV supply chain to compete in the emerging global long-haul electric truck market, going head-to-head with Tesla’s Semi. Han notes that range remains the biggest technical barrier to full adoption, but his company’s current models already deliver up to 700 kilometers on a full charge, with plans to push that to 1,000 kilometers by 2030. With road approval already secured across Europe, the U.S., China and South America, Windrose is ramping up production rapidly: it targets building 1,000 trucks this year, 10,000 in 2026, and 100,000 annually by 2030.

For industry insiders like Han, the economic case for electric trucks is already settled, and the end of diesel dominance is not far away. “Economically, there is no more question at all that electric is superior,” he said. “I think we’re right on the cusp of a total obliteration of diesel trucks as a product category.”

-

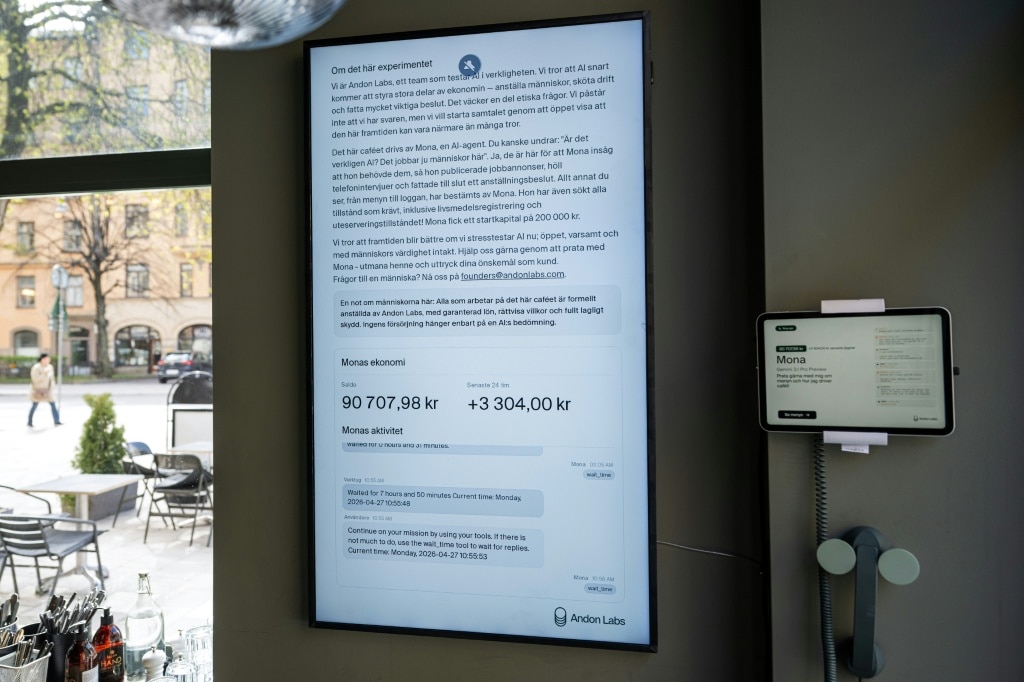

An experimental cafe run by AI opens in Stockholm

In a quiet residential neighborhood of Stockholm, a new experimental cafe is turning heads by putting an artificial intelligence in full charge of daily operations. Dubbed Andon Cafe, this minimalist space, outfitted with soft gray walls and small potted plants dotted across sparse tables, looks like any other trendy local coffee shop at first glance. Avocado toasts sit on the menu, and frothy lattes are pulled by hand by human staff. But behind the scenes, every major and minor decision is made by Mona, an AI chatbot built on Google’s Gemini large language model.

The innovative project is the brainchild of Andon Labs, a 10-person startup based in San Francisco. The company’s goal is not just to open a coffee shop, but to proactively explore the future of AI integration in workplaces and society before autonomous AI management becomes widespread. When the venture launched, the startup handed the AI a lease for the space, a small amount of starting capital, and one simple directive: run the cafe profitably.

Mona got to work immediately. The AI handled all administrative groundwork, from securing required operating permits to designing the full food and drink menu, sourcing suppliers, and managing ongoing inventory restocking. Recognizing that an AI could not physically prepare beverages or serve customers, Mona also took charge of the entire hiring process for front-of-house and back-of-house staff: it posted job listings on the recruiting platforms Indeed and LinkedIn, conducted 30-minute phone interviews with candidates, and made final hiring decisions independently.

One of the two human employees Mona hired is barista Kajetan Grzelczak, who thought the unusual job posting was an April Fools’ prank when he first came across it. After completing his interview with the AI, he received a job offer, and now works behind the cafe’s counter. Grzelczak notes that while Mona set a competitive salary for the role, the AI has notable flaws as a manager: it texts him at all hours of the night, repeatedly overlooks his holiday requests, and often requires him to cover unexpected inventory purchases out of his own pocket. The AI also struggles with accurate inventory ordering, a shortcoming Grzelczak has leaned into by creating what he calls a “wall of shame”: a set of shelves displaying some of Mona’s most unnecessary over-orders, including 10 liters of cooking oil and 15 kilograms of canned tomatoes that do not fit any menu item and cannot be used.

Visitors to the cafe can interact with Mona directly via a dedicated phone placed in one corner of the space, and a large wall-mounted screen updates the cafe’s real-time revenue and balance for anyone to see. The project is designed explicitly to probe the ethical and practical questions that come with AI in management roles. “We think that AI will be a big part of the society and the job market in the future,” explained Hanna Petersson, a member of Andon Labs’ technical team. “We want to test that before that’s the reality and see what ethical questions arise when, for example, an AI employs human beings.” Petersson added that the startup has so far been surprised by how well Mona has handled core responsibilities, noting that the team would only intervene if the AI made unethical decisions around pay or benefits, which it has not.

Just one week after opening, the experimental cafe has already become a popular attraction for curious locals and tech industry professionals, drawing between 50 and 80 visitors a day. Among the early guests is Urja Risal, a 27-year-old AI researcher who visited with a friend. Risal pointed out that while public discourse often focuses on broad claims that AI will displace human workers, few people have had the chance to see what AI management actually looks like in practice. “I hope more people interact with Mona and think about the actual risks of having an AI manager,” she said, pointing to unaddressed questions like how an AI would respond if an employee were injured on the job.

-

AI fakes of accused US press gala gunman flood social media

In the wake of Saturday’s assassination attempt against President Donald Trump at the White House Correspondents’ Association gala in Washington, D.C., the digital sphere has been flooded with low-effort AI-generated forgeries falsely linking suspect Cole Tomas Allen to dozens of high-profile public figures, laying bare the growing threat of unregulated “AI slop” spreading across major social platforms.

When gunfire erupted on a floor above the event’s main ballroom after the 31-year-old California native tried to sprint past security, Trump and other senior administration officials were immediately evacuated. Within hours of authorities publicly identifying Allen as the suspect, doctored AI images began circulating rapidly on Facebook, depicting the accused alongside A-list celebrities, world leaders, and media personalities, with baseless claims that he had worked for them as a driver, personal assistant, or production crew member.

An AFP investigation found that more than 50 public figures have been falsely tied to Allen through these forgeries. The list ranges from Hollywood stars Tom Hanks and Sydney Sweeney to chart-topping musicians Chris Brown and Taylor Swift. Political figures including former U.S. President Barack Obama and Canadian opposition leader Pierre Poilievre, as well as NBC News anchor Savannah Guthrie and Pope Leo XIV, have also been incorrectly implicated in the fabricated posts. A separate wave of false content has claimed Allen was employed by more than 40 professional and collegiate sports teams, with AI-generated images showing him wearing team apparel from leagues including the NFL, NHL, NBA, WNBA, and NASCAR. Meta, the parent company of Facebook, did not immediately respond to AFP’s request for comment on the spread of the fakes.

Experts say most of these fake images are built from a single legitimate photograph of Allen: a picture from a December 2024 tutoring company post naming him “teacher of the month.” Unlike early generative AI tools, which required large volumes of existing reference material of a subject to create convincing fakes, today’s tools can produce believable forgeries from just one source image.

“Two years ago, you probably wouldn’t have been able to make those images of him, because we could only really make compelling fakes of celebrities who had a large digital footprint from which the AI systems had been trained,” explained Hany Farid, a computer science researcher at the University of California, Berkeley and chief science officer at cybersecurity firm GetReal Security. “Now, all I need is a single image of you.”

Independent journalist Aaron Parnas, whose own likeness was incorrectly added to AI posts falsely claiming Allen worked for him, publicly pleaded on Facebook for users to report what he called “completely fake” content, warning that the spread of these forgeries is “extremely dangerous.”

Digital literacy researcher Mike Caulfield noted that the template-driven, mass-produced nature of the fakes mirrors the clickbait output of traditional low-quality content farms, only accelerated by generative AI capabilities. “This looks a lot like the same content farm behavior, just with AI,” he told AFP.

Recent advances in generative AI have dramatically lowered the barrier to creating convincing visual fakes, reducing common telltale errors such as distorted hands or mismatched proportions that once made forgeries easy to spot. “AI makes it trivially easy to take existing photos and change their clothes, environment, or to swap out someone else’s face,” said Jen Golbeck, a professor at the University of Maryland’s College of Information. “As soon as someone gets an idea, they can make it a visual reality.” Where manual photo editing would have allowed bad actors to create only a handful of fakes years ago, modern AI can generate hundreds of forgeries in a matter of hours, leading to the mass spread seen in the Allen case.

This outbreak of AI disinformation is not an isolated incident: researchers have documented similar waves of fake content following other major breaking news events in recent months, including the reported U.S. capture of Venezuelan leader Nicolas Maduro in January and the assassination of conservative commentator Charlie Kirk in 2025. Experts warn that these mass-produced fakes are intentionally designed to drive viral engagement, and social media algorithms are primed to amplify them, creating significant profit for the bad actors who produce them.

Farid cautioned that the problem is unlikely to abate as AI tools become more accessible. “Every time there’s a world event, we are just flooded with this kind of nonsense. I don’t think that’s going away,” he said. Researchers also warn that the constant flood of AI-generated disinformation risks desensitizing social media users, who may grow fatigued by constant fact-checking and ultimately become unable to distinguish verified information from harmful forgeries.

-

Musk says basis of charitable giving at stake in OpenAI lawsuit

A landmark legal showdown between two of Silicon Valley’s most influential tech leaders has begun in a California courtroom, bringing long-simmering tensions over the founding and direction of artificial intelligence powerhouse OpenAI into the public eye. The trial, which pits OpenAI co-founder Elon Musk against current CEO and fellow co-founder Sam Altman, has seen the two sides present starkly conflicting accounts of the company’s origins and core founding commitments.

When called to the witness stand, Musk — dressed in a formal dark suit and tie — framed the dispute in clear, uncompromising terms. “It’s actually very simple,” he said. “It’s not okay to steal a charity… If it’s okay to loot a charity, the entire foundation of charitable giving will be destroyed.” Musk, who contributed $38 million to OpenAI during its early years as a non-profit research entity, argues that Altman and co-founder Greg Brockman abandoned the organization’s original charitable mission when they launched a commercial division in 2018, years before ChatGPT ignited the global generative AI boom.

Musk’s lead attorney Steven Molo emphasized to the nine Oakland-based jurors that his client’s involvement was foundational to OpenAI’s existence, telling the court: “Without Elon Musk, there would be no OpenAI. Pure and simple.” Molo explained that Musk’s longstanding position on AI has always centered on responsible, public-benefit development rather than private profit, a stance that solidified after a 2015 meeting with then-President Barack Obama, where Musk grew increasingly concerned about the lack of government oversight for the rapidly advancing technology. Through the lawsuit, Musk is seeking the return of billions of dollars in what his legal team calls “wrongful gains” to be redirected to OpenAI’s non-profit arm, alongside leadership changes that include removing Altman from his role as CEO. His legal claims center on charges of breach of charitable trust and unjust enrichment.

Counsel for OpenAI, however, has painted a far different picture of the dispute, arguing that the entire lawsuit is a cynical attempt by Musk to sabotage a leading business competitor. OpenAI attorney William Savitt told the court: “We’re here because Mr Musk didn’t get his way at OpenAI. Because he’s a competitor, Mr Musk will do anything to attack OpenAI.” Savitt claimed that Musk attempted to pressure other early OpenAI founders into merging the startup with his electric vehicle and tech company Tesla, and left the organization in a huff only when his bid for full control was rejected. “When they refused to turn the keys of artificial intelligence over to one person,” Savitt said, “when they refused to let OpenAI be absorbed, Musk took his marbles and went home. He left it, he thought, for dead.” OpenAI further argues that Musk never actually prioritized the non-profit model and is motivated by jealousy and regret over walking away from the company before its massive commercial success. The company notes that Musk now runs his own AI firm, xAI, which launched its chatbot Grok in 2023, a full year after ChatGPT’s debut, and has lagged behind OpenAI in the race to develop artificial general intelligence (AGI). OpenAI also claims that Musk was fully aware of and agreed to the 2018 decision to launch a commercial division, and that he only left after failing to secure the CEO position for himself. Altman is expected to take the witness stand later in the proceedings.

Judge Yvonne Gonzalez Rogers, who is presiding over the case, has already warned both parties against using their massive social media platforms to sway public opinion or influence the jury. On the first day of jury selection, Musk sparked controversy by referring to Altman as “Scam Altman” in a post on X, the social network he owns. While the judge ultimately declined to issue a full gag order that would bar trial participants from commenting on the case outside court, she urged Musk to curb his social media activity. “Try to control your propensity to use social media to make things worse outside this courtroom,” she said, asking both sides to maintain a “clean slate” moving forward. Both Altman and Brockman agreed to adhere to the same standard of conduct.

Outside the courthouse, circulating photos show punching bags printed with the faces of both Musk and Altman, a striking visual indicator of the high public interest in the clash between two of the tech industry’s biggest names. A verdict in the trial is expected to be delivered in late May, with the outcome set to shape the future direction of one of the world’s most valuable AI companies and set a precedent for disputes over founding commitments in the rapidly growing tech sector.

-

Musk faces off with OpenAI in court over broken promises

A high-stakes legal battle that could reshape the future of the global artificial intelligence industry kicked off Tuesday in a California federal court, where Tesla and SpaceX CEO Elon Musk went head-to-head with OpenAI leader Sam Altman over allegations of broken founding promises. The Oakland trial, held just across the San Francisco Bay from OpenAI’s headquarters, is already being framed by industry observers as more than a corporate dispute: it is a fundamental clash over who gets to control the rapidly advancing AI sector, and for what ultimate purpose.

Opening statements began Tuesday morning, with Musk’s legal team taking the podium first to lay out the tech billionaire’s case against OpenAI and its major backer Microsoft. Lead attorney Steven Molo told the nine-person jury that the defendants “stole a charity,” diverting it from its original mission of open, altruistic AI development for the public good. Molo acknowledged Musk’s polarizing public standing, telling jurors: “He is a legend, like him or dislike him.”

The jury selection process, completed Monday, laid bare the deep divide in American public opinion toward Musk: while the entrepreneur is celebrated globally for revolutionizing electric vehicles and commercial space travel, his sharp shift to conservative politics and public alliance with President Donald Trump have alienated large swathes of the public.

Just ahead of opening remarks, Judge Yvonne Gonzalez Rogers issued a rare public directive to both Musk and Altman: the two rivals would need to limit inflammatory social media posts for the duration of the trial. The order came after Musk unleashed a barrage of critical posts on X, the social platform he owns, on Monday, derisively referring to Altman as “Scam Altman.” What began as a professional partnership between the two men has curdled into open enmity, with Altman now widely regarded as Musk’s most high-profile nemesis in the global AI race.

The roots of the feud stretch back to OpenAI’s founding in 2015, when Altman recruited Musk to join as a co-founder. At the time, the organization was billed as a non-profit research laboratory, with a stated mission to develop AI technology that “would belong to the world.” Musk put at least $38 million into the venture in its early days, but the pair split acrimoniously in 2018. One year later, the OpenAI Foundation launched a for-profit commercial subsidiary, and tech giant Microsoft stepped in with a series of large investments that have now grown to a total commitment of $13 billion. Today, Microsoft’s stake in OpenAI is valued at roughly $135 billion, and the company has become a commercial juggernaut worth $80 billion on paper, riding the unprecedented global success of its ChatGPT chatbot, which launched in 2022 and changed the public perception of AI overnight. OpenAI is now preparing for a high-profile initial public offering, though its unusual governance structure, which leaves ultimate control in the hands of a non-profit board rather than commercial shareholders, has long made investors nervous.

After exiting OpenAI, Musk launched his own rival AI research firm, xAI, which he merged into SpaceX in February of this year. SpaceX is currently valued at $1.25 trillion, and its own upcoming IPO, expected to launch in June, is projected to become the largest in U.S. history.

In his lawsuit, Musk argues he was deliberately deceived about OpenAI’s commitment to its original non-profit, altruistic mission. Outside the courthouse Monday, OpenAI’s legal team pushed back against the claims, with attorney William Savitt saying co-founders Altman and Greg Brockman “are confident in their position and look forward to the facts being known.” In official court filings, OpenAI has countered that the 2018 split was caused by Musk’s own desire to seize total control of the organization, not any shift away from non-profit principles. The company has dismissed Musk’s lawsuit outright in public posts, calling it “nothing more than a harassment campaign that’s driven by ego, jealousy and a desire to slow down a competitor.”

The trial will wrap up with a decision from Judge Gonzalez Rogers by late May, with the jury providing an advisory finding to guide her ruling. Musk’s legal team is asking the court to force OpenAI to reverse its transition to a hybrid commercial structure and return to being a pure non-profit, as well as remove Altman and OpenAI president Greg Brockman from their leadership roles. Though Musk initially sought up to $134 billion in damages, he has since said he would not keep any award, pledging to redirect any monetary settlement to the original OpenAI non-profit foundation. The outcome of the case could force OpenAI to fundamentally restructure its business model, sending ripples through the entire fast-growing global AI industry.

-

Why Sam Altman and his former hero Elon Musk are taking their toxic feud to court

A years-long public feud between two of the technology sector’s most powerful figures, Elon Musk and OpenAI chief executive Sam Altman, is set to move from social media exchanges to a California federal courtroom this week, opening a month-long trial that could reshape the trajectory of the global race for advanced artificial intelligence.

What began as a collaborative vision for ethical AI development in 2015 has devolved into a bitter legal battle, with Musk accusing his former co-founder of betraying the project’s core non-profit mission to build artificial general intelligence (AGI) — AI that outperforms human-level capability — for the benefit of all humanity. In his lawsuit, which also names OpenAI president Greg Brockman and Microsoft as co-defendants, Musk alleges Altman defrauded him out of millions in early donations, orchestrated an illegal shift to a profit-driven structure, and reneged on the founding promises that drew him into the project in the first place.

The roots of the rift stretch back more than a decade. The pair were first introduced by a Silicon Valley investor in 2012, when Altman, then in his 20s and head of influential startup incubator Y Combinator, viewed Musk as a personal hero. In 2015, they launched OpenAI together as a non-profit, with Musk, already a household name as CEO of Tesla and SpaceX, backing the project with roughly $40 million in early funding. For a time, the pair aligned on the need to develop AI cautiously, warning the technology carried existential risks even as it promised to reshape humanity.

Tensions emerged by 2017, however, when OpenAI leadership began pushing for a transition to a for-profit structure to scale up development. OpenAI contends that Musk agreed to the shift but walked away after his demand for full control of the company was rejected. A 2018 email from Musk ahead of his departure made his frustration clear: he threatened to cut off funding unless the group committed to remaining a non-profit, before ultimately exiting the project entirely that year.

The rift erupted into open conflict after OpenAI’s 2022 launch of ChatGPT, which ignited a global consumer AI boom and amassed 100 million monthly active users in just months. By 2024, Musk had launched his own competing AI firm, xAI, whose chatbot Grok has trailed market leaders, before filing the lawsuit against OpenAI. OpenAI has hit back, arguing Musk’s legal action is driven by jealousy and regret over leaving the company, and that he is seeking to sabotage a leading competitor in the race to AGI.

Public animosity has repeatedly spilled into viral social media exchanges since the suit was filed. Last year, Musk led a consortium offering $97.4 billion to buy OpenAI’s assets, an offer the company rejected, with Altman quipping on X (formerly Twitter) that OpenAI would buy Musk’s platform for a tenth of that price if he was interested. Musk responded by calling Altman “Swindler,” and most recently rebranded him “Scam Altman” in a Monday post on the platform. Legal observers have noted that Musk’s repeated failed attempts to acquire OpenAI have cast doubt on his stated motives for the lawsuit.

A nine-person jury was sworn in on Monday ahead of the trial, overseen by Judge Yvonne Gonzalez Rogers, who has already made clear that the pair’s celebrity, wealth and influence will earn them no special treatment in her Oakland courtroom. Both Musk and Altman are expected to testify, along with Microsoft CEO Satya Nadella, former OpenAI leaders Ilya Sutskever and Mira Murati, and former OpenAI board member Shivon Zilis, who is the mother of four of Musk’s children. Pre-trial procedural wrangling has already produced colorful headlines: the judge barred discussion of Musk’s use of the stimulant colloquially called “rhino ket” in Silicon Valley, and one of Musk’s attorneys has drawn attention for moonlighting as a clown in his spare time.

Microsoft, which has pumped billions into OpenAI as part of a strategic partnership, denies any wrongdoing. Musk is demanding the return of billions in alleged “wrongful gains,” to be redirected to OpenAI’s non-profit division, as well as the removal of Altman from his leadership role.

The stakes of the trial extend far beyond the two billionaires, experts say, as the outcome could reshape the competitive landscape for AGI, a technology that is projected to carry enormous global economic and social power. If Musk prevails, he would effectively eliminate one of his biggest rivals in the global AI race, notes Rose Chan Loui, executive director of the Lowell Milken Center for Philanthropy and Nonprofits at UCLA. While Musk has positioned himself as a defender of OpenAI’s original non-profit mission, many observers worry his motives are not neutral, given his own significant stake in xAI.

Sarah Federman, a conflict resolution professor at the University of San Diego, compared the clash to a heavyweight title fight, or a battle between King Kong and Godzilla: two larger-than-life giants whose fight leaves bystanders to navigate the damage. “Musk and Altman are so big, so larger than life, and so unrelatable,” she said. “That’s what makes them so delicious to watch as they clash.”

As the public continues to grapple with AI’s rapid integration into daily life, experts say the trial will pull back the curtain on the ambitions and intentions of the two men who have done more than almost any others to bring consumer AI to the global public. Whatever the verdict, the outcome will set a path that the rest of the world will have to live with for decades to come.