China’s healthcare sector is undergoing a radical technological transformation as artificial intelligence and digital tools emerge to address systemic problems: hospitals running over capacity and unevenly distributed resources. The nation’s ambitious digitization push, accelerated by rapid AI advances, is reshaping how care is delivered in both urban and rural areas.

Shanghai-based obstetrician Duan Tao exemplifies this shift through his AI avatar on the healthcare application AQ (known as Afu in Chinese), which has amassed over 100 million users. The digital double, trained on textbooks, clinical case studies, and Duan’s social media content totaling more than 10 million data points, provides medical guidance but explicitly does not prescribe medication. Ant Group, AQ’s developer, emphasizes that the technology is meant to supplement consultations, not replace treatment.

Patient experiences demonstrate the technology’s practical impact. Wang Yifan, a new mother, utilized obstetrician and pediatrician avatars throughout her pregnancy and postpartum period, reducing hospital visits and minimizing infection risks for her infant. “It can reduce the number of questions we need to ask doctors directly,” Wang noted, highlighting the platform’s role as a medical information mediator.

This technological transformation occurs against a backdrop of demographic pressures, with China’s aging population intensifying strain on healthcare infrastructure. Ruby Wang of LINTRIS Health consultancy observes that “urgency drives change” in China’s health technology landscape, where state-industry alignment enables rapid pilot implementation at unprecedented scale.

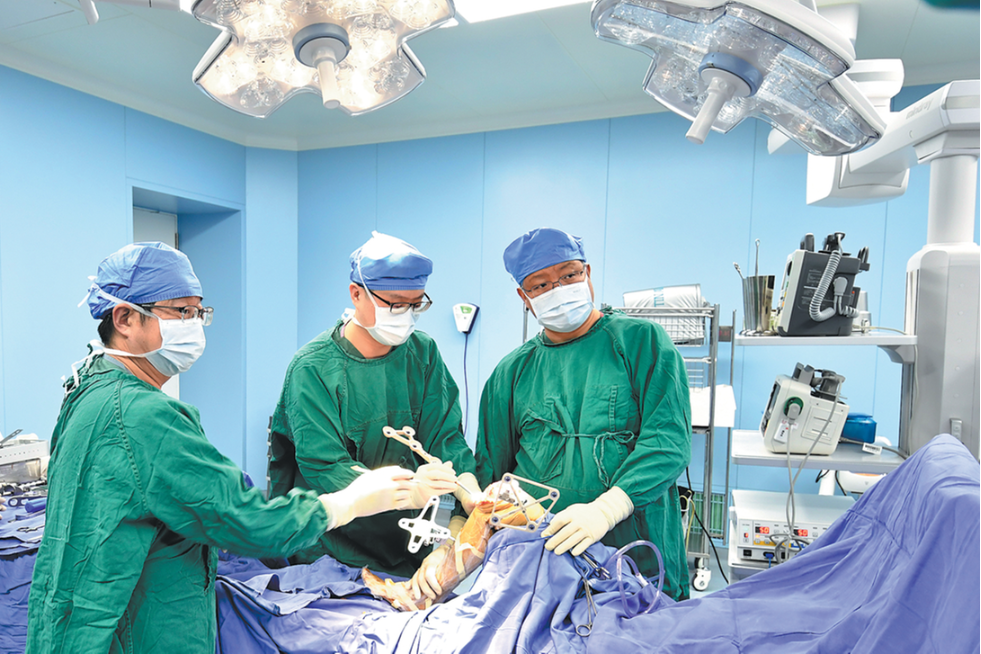

Deployments across the country span diverse applications: the chatbot DeepSeek is in use in hundreds of hospitals, Tsinghua University runs an AI-integrated medical facility, and specialized tools such as CardioMind (cardiology diagnostics) and PANDA (pancreatic cancer detection) illustrate how widely the sector has adopted AI. Robotics companies such as Fourier supply mechanical rehabilitation arms to rural centers, helping to address geographical disparities in care.

Despite enthusiastic adoption, evidenced by references to AI healthcare in China’s Spring Festival Gala, medical professionals maintain cautious oversight. Dr. Duan emphasizes that “humans must retain the ultimate decision-making and choice,” acknowledging AI’s potential for hallucination. Infectious disease expert Zhang Wenhong warns that, without proper training protocols, overreliance could erode physicians’ diagnostic judgment.

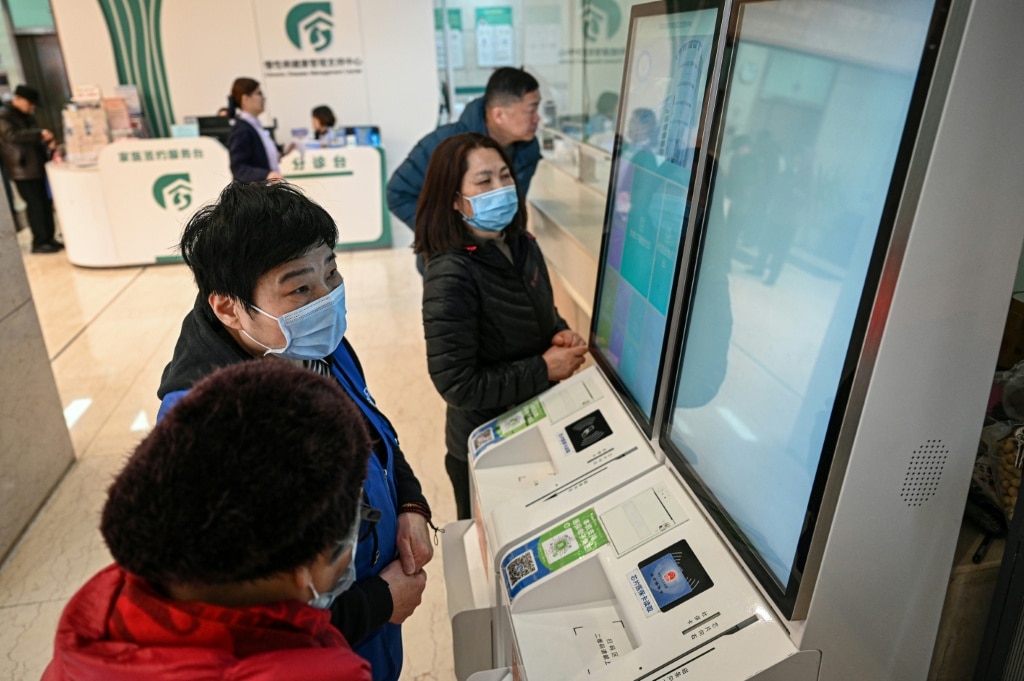

The transformation faces practical challenges, particularly among elderly patients requiring assistance with digital systems. Volunteers like 65-year-old Yan Sulian help bridge this technological gap at Shanghai health centers, teaching older citizens to navigate electronic registration systems and verify AI-generated medical advice through traditional consultations.

As China prepares a 15th Five-Year Plan that emphasizes technological transformation, AI integration in healthcare represents both a practical response to systemic challenges and a case study in balancing innovation with medical caution. In this rapidly evolving landscape, safety remains the paramount concern.